Chinese Word Segmentation and Word Frequency Counting in Python

爱吃糖的月妖妖

Preface

This post records some of the steps, with code, for processing text in Python.

I. Importing the text

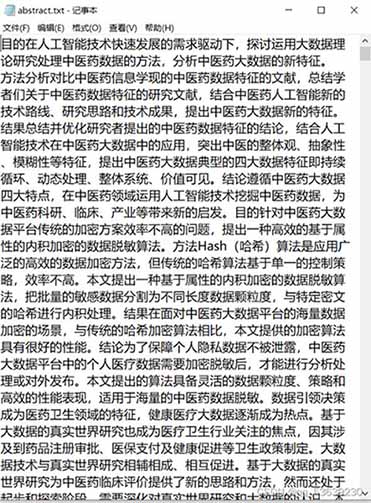

I prepared a text file named abstract.txt.

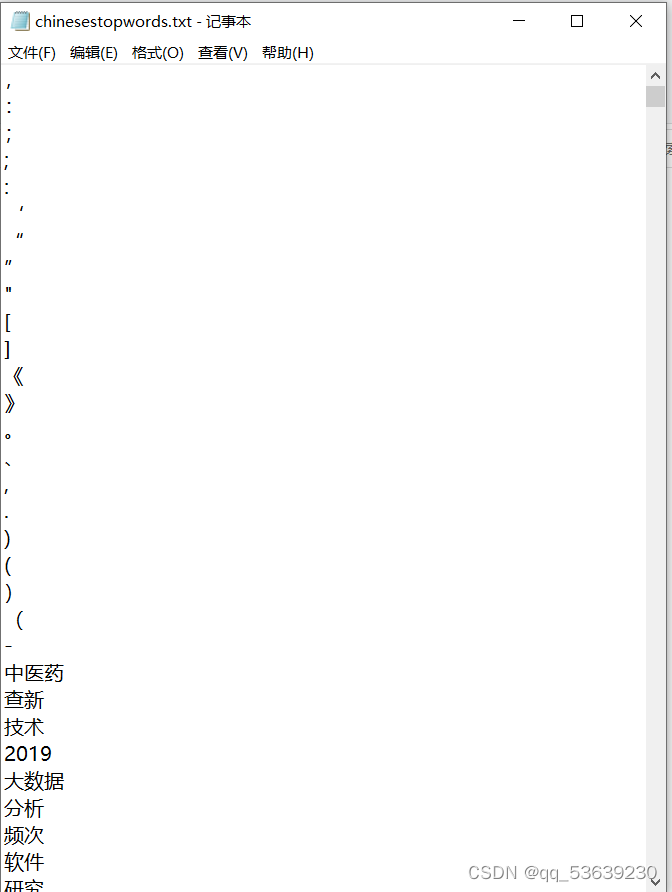

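Next, I downloaded stopword.txt from the internet (the stop-word list used during jieba segmentation).

I also added a few words of my own that I considered uninformative.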

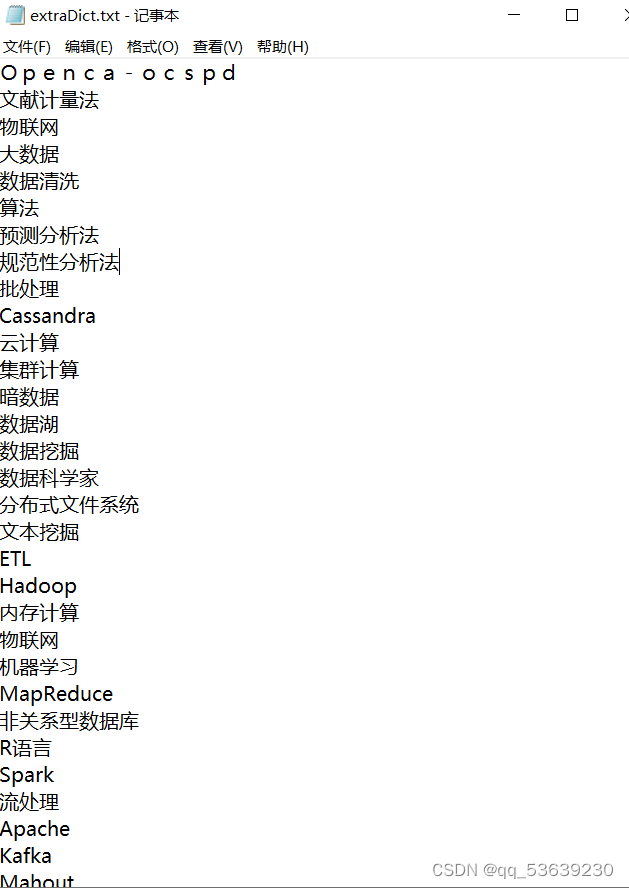

I also built my own dictionary, extraDict.txt.
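For readers who have not seen these two helper files before, the sketch below writes tiny example versions of them. The entries are made up for illustration and `write_sample_files` is a hypothetical helper, not part of the original post. It assumes the stop-word list holds one word per line, and it follows the jieba user-dictionary layout of one entry per line: the word, then an optional frequency, then an optional part-of-speech tag, separated by spaces.

```python
from pathlib import Path


def write_sample_files(directory):
    """Create small example versions of the two helper files (entries are made up)."""
    directory = Path(directory)

    # Stop-word list: one word per line.
    (directory / "stopword.txt").write_text("的\n了\n是\n", encoding="utf-8")

    # jieba user dictionary: "word [frequency] [POS tag]" per line,
    # where the frequency and the tag may be omitted.
    (directory / "extraDict.txt").write_text(
        "词频统计 10 n\n中文分词\n", encoding="utf-8"
    )
```

Both files should be saved as UTF-8, which matches the encoding used when the files are opened later in this post.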

With the preparation done, let's see how to use them!

II. Steps

1. Import the libraries

The code is as follows:

import jieba
from jieba.analyse import extract_tags
from sklearn.feature_extraction.text import TfidfVectorizer

2. Load the data

The code is as follows:

jieba.load_userdict('extraDict.txt')  # load the custom dictionary

3. Load the stop-word list

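def stopwordlist():
    # Read the stop-word file, one word per line.
    # (The text above calls this file stopword.txt; use the name your file actually has.)
    with open('chinesestopwords.txt', encoding='UTF-8') as f:
        stopwords = [line.strip() for line in f]
    # --- Extra stop words; adjust to your own needs ---
    # Adds the numbers 10 through 28 as strings.
    for i in range(19):
        stopwords.append(str(10 + i))
    # ----------------------
    return stopwords

4. Segment and remove stop words (at this point Python's built-in functions can be used directly to count word frequencies)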
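One thing to be aware of in this step: seg_word calls stopwordlist() for every input line, so the stop-word file is read again each time, and membership is tested against a list. As a possible variant (not the author's code), the stop words could be read once into a set and passed in. In the sketch below, `load_stopwords` and `remove_stopwords` are hypothetical names, and `tokens` is assumed to be a list of already segmented words, for example `list(jieba.cut(line))`.

```python
def load_stopwords(path):
    """Read a stop-word file (one word per line) into a set for fast lookup."""
    with open(path, encoding="utf-8") as f:
        return {line.strip() for line in f if line.strip()}


def remove_stopwords(tokens, stopwords):
    """Keep tokens that are neither stop words nor pure whitespace.

    Note: this drops every whitespace-only token, which is slightly
    broader than the single-space check used in seg_word.
    """
    return [t for t in tokens if t.strip() and t not in stopwords]
```

With this shape, the set is built once before the loop over input lines and reused for every line.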

def seg_word(line):
    # seg = jieba.cut_for_search(line.strip())
    seg = jieba.cut(line.strip())
    temp = ""
    counts = {}
    wordstop = stopwordlist()
    for word in seg:
        if word not in wordstop:
            if word != ' ':
                temp += word
                temp += '\n'
                counts[word] = counts.get(word, 0) + 1  # count occurrences of each word
    return temp  # return the segmentation result, one word per line
    # Alternative: return the 20 most frequent words and their counts
    # return str(sorted(counts.items(), key=lambda x: x[1], reverse=True)[:20])

5. Write the useful words (segmented, with stop words removed) to a txt file

def output(inputfilename, outputfilename):
    # The with statement closes both files even if an error occurs.
    with open(inputfilename, encoding='UTF-8', mode='r') as inputfile, \
            open(outputfilename, encoding='UTF-8', mode='w') as outputfile:
        for line in inputfile:
            line_seg = seg_word(line)
            outputfile.write(line_seg)
    return outputfile  # the file object is already closed at this point

6. Function call

if __name__ == '__main__':
    print("__name__", __name__)
    inputfilename = 'abstract.txt'
    outputfilename = 'a1.txt'
    output(inputfilename, outputfilename)

7. Results

![]()
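Because seg_word writes one word per line, the output file can be counted with the standard library alone. The following is a minimal sketch under that assumption; `top_words` is a hypothetical helper that does not appear in the code above, and it uses `collections.Counter` in place of the sorted-dictionary line that is commented out in seg_word.

```python
from collections import Counter


def top_words(path, n=20):
    """Return the n most frequent words in a file that holds one word per line."""
    with open(path, encoding="utf-8") as f:
        # Skip empty lines so they are not counted as words.
        words = [line.strip() for line in f if line.strip()]
    return Counter(words).most_common(n)
```

Calling `top_words('a1.txt')` after running the script would give a list of `(word, count)` pairs, most frequent first.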

Appendix: given a passage of text, count how many times each word appears

First, the idea.

For example, take the following passage:

Love is more than a word

it says so much.

When I see these four letters,

I almost feel your touch.

This is only happened since

I fell in love with you.

Why this word does this,

I haven’t got a clue.

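To count how many times each word appears, the idea is simple. Split the string into words and define an empty dictionary, count_dict. For each word, check whether it is already a key in count_dict. If it is not, add the word as a key with the value 1. If it is already there, add 1 to its value.

Now let's look at the code: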

# Define the string (triple quotes are convenient for a long, multi-line string)
sentences = """
Love is more than a word
it says so much.
When I see these four letters,
I almost feel your touch.
This is only happened since
I fell in love with you.
Why this word does this,
I haven't got a clue.
"""

# Implementation
# Remove the commas. To strip many kinds of punctuation, use a loop; two calls are enough here.
sentences = sentences.replace(',', '')
sentences = sentences.replace('.', '')  # remove the periods
words = sentences.split()  # split the text into a list of individual words
# print(words)

count_dict = {}
for word in words:
    if word not in count_dict:  # first time this word is seen
        count_dict[word] = 1
    else:  # the word is already in the dictionary
        count_dict[word] += 1

for key, value in count_dict.items():
    print(f"{key} appeared {value} time(s)")

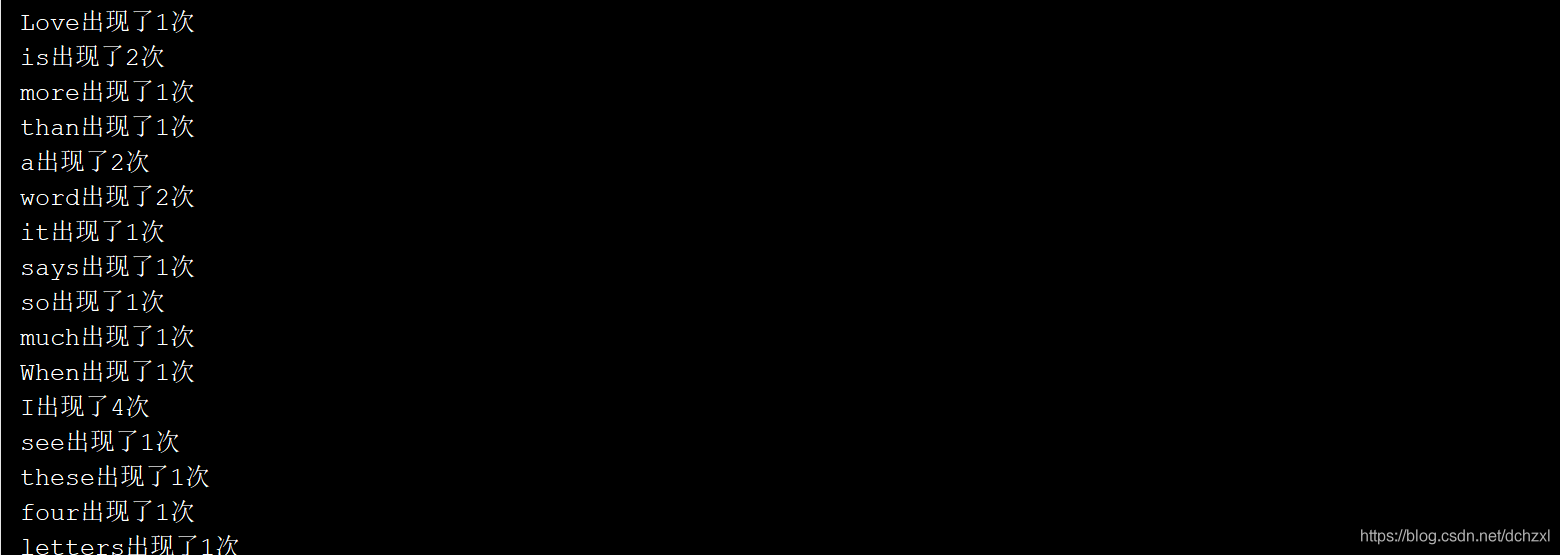

输出结果是这样:
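The code comment above mentions that removing many kinds of punctuation calls for a loop. As one possible alternative (a sketch, not the author's method), `str.translate` can delete all ASCII punctuation in a single pass and `collections.Counter` can do the tallying. `count_words` is a hypothetical name, and keeping the apostrophe is an assumption made here so that "haven't" stays one word.

```python
import string
from collections import Counter


def count_words(text):
    """Count whitespace-separated words after deleting ASCII punctuation."""
    # Delete every ASCII punctuation character except the apostrophe.
    to_delete = string.punctuation.replace("'", "")
    table = str.maketrans("", "", to_delete)
    return Counter(text.translate(table).split())
```

Like the dictionary version, this is case-sensitive, so "Love" and "love" are counted separately; passing `text.lower()` instead would merge them.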

Summary

That is all for today. This post gave only a brief introduction to Chinese word segmentation and word frequency counting in Python!
