Python结合Sprak实现计算曲线与X轴上方的面积

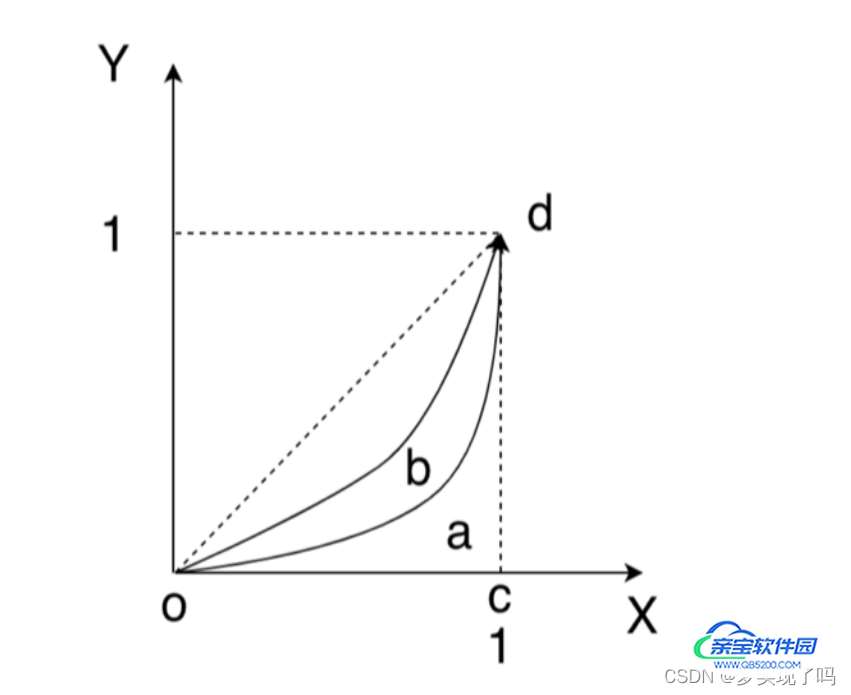

梦实现了吗 人气:0有n组标本(1, 2, 3, 4), 每组由m个( , , ...)元素( , )组成(m值不定), . 各组样本的分布 曲线如下图所示. 通过程序近似实现各曲线与oc, cd直线围成的⾯积.

思路

可以将图像分成若干个梯形,每个梯形的底边长为(Xn+1 - Xn-1),面积为矩形的一半,其面积 = (底边长 X 高)/2,即S = (Xn+1 - Xn-1) * (Yn+1 + Yn+2),对于整个图形,面积为所有梯形面积之和。

[图片]求曲线与其下方x轴的面积,本质上是一个求积分的过程。可以对所有点进行积分,可以调用np.tapz(x, y)来求

代码

"""Calculate the area between the coordinates and the X-axis

"""

import typing

from pandas import read_parquet

def calc_area(file_name: str) -> typing.Any:

"""⾯积计算.

Args:

file_name: parquet⽂件路径, eg: data.parquet

Returns:

计算后的结果

"""

res = []

# Load data from .parquet

initial_data = read_parquet(file_name)

# Get number of groups

group_numbers = initial_data["gid"].drop_duplicates().unique()

# Loop through the results for each group

for i in group_numbers:

data = initial_data[initial_data["gid"] == i]

data = data.reset_index(drop=True)

# Extract the list of x\y

x_coordinates = data["x"]

y_coordinates = data["y"]

# Calculate area between (x[i], y[i]) and (x[i+1], y[i+1])

rect_areas = [

(x_coordinates[i + 1] - x_coordinates[i])

* (y_coordinates[i + 1] + y_coordinates[i])

/ 2

for i in range(len(x_coordinates) - 1)

]

# Sum the total area

result = sum(rect_areas)

res.append(result)

# Also we can use np for convenience

# import numpy as np

# result_np = np.trapz(y_coordinates, x_coordinates)

return res

calc_area("./data.parquet")或者使用pyspark

"""Calculate the area between the coordinates and the X-axis

"""

import typing

from pyspark.sql import Window

from pyspark.sql.functions import lead, lit

from pyspark.sql import SparkSession

def calc_area(file_name: str) -> typing.Any:

"""⾯积计算.

Args:

file_name: parquet⽂件路径, eg: data.parquet

Returns:

计算后的结果

"""

res = []

# Create a session with spark

spark = SparkSession.builder.appName("Area Calculation").getOrCreate()

# Load data from .parquet

initial_data = spark.read.parquet(file_name, header=True)

# Get number of groups

df_unique = initial_data.dropDuplicates(subset=["gid"]).select("gid")

group_numbers = df_unique.collect()

# Loop through the results for each group

for row in group_numbers:

# Select a set of data

data = initial_data.filter(initial_data["gid"] == row[0])

# Adds a column of delta_x to the data frame representing difference

# from the x value of an adjacent data point

window = Window.orderBy(data["x"])

data = data.withColumn("delta_x", lead("x").over(window) - data["x"])

# Calculated trapezoidal area

data = data.withColumn(

"trap",

(

data["delta_x"]

* (data["y"] + lit(0.5) * (lead("y").over(window) - data["y"]))

),

)

result = data.agg({"trap": "sum"}).collect()[0][0]

res.append(result)

return res

calc_area("./data.parquet")提高计算的效率

- 可以使用更高效的算法,如自适应辛普森方法或者其他更快的积分方法

- 可以在数据上进行并行化处理,对pd DataFrame\spark DataFrame进行分区并使用分布式计算

- 在使用spark的时候可以为window操作制定分区来提高性能

- 以下为与本例无关的笼统的提高效率的方法

并行计算:使用多核CPU或分布式计算系统,将任务分解成多个子任务并行处理。

数据压缩:压缩大数据以减少存储空间和带宽,加快读写速度。

数据分块:对大数据进行分块处理,可以减小内存需求并加快处理速度。

缓存优化:优化缓存策略,减少磁盘访问和读取,提高计算效率。

算法优化:使用高效率的算法,比如基于树的算法和矩阵算法,可以提高计算效率。

加载全部内容